In October 2018 I did a talk at SearchLove called: Creativity, Crystal Balls & Eating Ground Glass. It was a bit different to the talks that I usually give, in that it was largely based on a thought experiment. At that point I’d been responsible for creating content which generates coverage and links from journalists for several years. Towards the end of 2017 someone asked me:

How good are you at predicting the success (or otherwise) of a piece?

I realised I didn’t have a great answer (I thought I was good, but I had no evidence to support that thinking), and so, in 2018, I resolved to make a prediction about how each piece would perform ahead of launch. Within the talk I shared my predictions (which, to be fair, I think were only somewhat interesting); but I also shared what I learned along the way (which I thought was way more interesting).

I’ve been meaning to write up this deck for a long time, partially because there’s only so much stuff you can squeeze into a 30 minute talk; but also because some of the stuff I learned subsequently, I’ve never shared.

This is likely to be a long old post, so you might want to grab a coffee (or similar) – I hope you’ll find it useful and/or interesting.

How I made those predictions:

Predicting the success or otherwise of a creative piece is not strictly binary, and so, I created the following scale:

| Scoring Band | LinkScore Points | Equivalent number of links |
| --- | --- | --- |
| A | 10,000+ | More than 100 |
| B | 5,000 – 9,999 | 50 – 99 |
| C | 2,000 – 4,999 | 20 – 49 |
| D | 1,000 – 1,999 | 10 – 19 |
| E | Less than 1,000 | Less than 10 |

LinkScore is a proprietary metric created and used by Verve. My original predictions were based solely on LinkScore; the equivalent number of links column is just to give you a sense of what that means (i.e. an average link is worth around 100 points).
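If you wanted to apply the same scale to your own numbers, a minimal sketch might look like the one below. The thresholds are copied straight from the table; the function name `linkscore_to_band` and the `BANDS` list are my own illustrative choices, and nothing here reflects how LinkScore itself is calculated.

```python
# Minimum LinkScore points needed for each band, highest band first.
# Thresholds are taken from the scoring table; the names are hypothetical.
BANDS = [("A", 10_000), ("B", 5_000), ("C", 2_000), ("D", 1_000)]


def linkscore_to_band(points):
    """Return the scoring band ("A" to "E") for a LinkScore total."""
    for band, minimum in BANDS:
        if points >= minimum:
            return band
    # Anything under 1,000 points falls into the lowest band.
    return "E"
```

For example, `linkscore_to_band(4_999)` lands in band "C", while `linkscore_to_band(5_000)` tips over into band "B".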

Ahead of launch I predicted the scoring band of each campaign.

In the event that a campaign launched without me making a prediction (this happened a couple of times) I did not make a retrospective prediction. I did this because I felt that it wouldn’t be a true reflection of my thoughts (i.e. my predictions would be influenced by how the campaign was performing).

It’s probably worth noting here that I made all of these predictions without telling my team what I was up to. I just saved the predictions in a google document and didn’t think too much about them. Moreover, I didn’t analyse how I was faring until I came to write the talk.

That’s cute Hannah, but HOW did you decide what band to put each campaign into?

This was the question fired at me from someone on my team when I presented a draft version of the SearchLove deck internally at Verve.

It’s an excellent question.

But it’s one that’s difficult to answer.

Deconstructing my own thought processes is something I struggle with. Here are the things I think I considered when scoring each campaign:

Resonance

resonance = the power to evoke emotion

Initially I might consider resonance at a topic level:

In this example you can see that 35 times the number of articles are written about AI vs RPA, and these articles get 8 times more engagement. This would lead me to conclude that AI is a more resonant topic than RPA.

But I think about this stuff at a human level too: how many people are likely to care about this? Or, how many people are likely to be touched in some way by this?

Breadth of Appeal

Here I’m thinking about things like:

- How many publications are likely to cover this?

- Can we sell it in to different verticals?

- Different countries?

- Can we use the piece to tell a variety of stories?

Past Experience

This is really hard to deconstruct – essentially here I’m thinking about how I’ve seen similar pieces perform before. I’ll talk more about this a bit later on in the post.

How accurate were my predictions?

The short answer is: not very accurate at all.

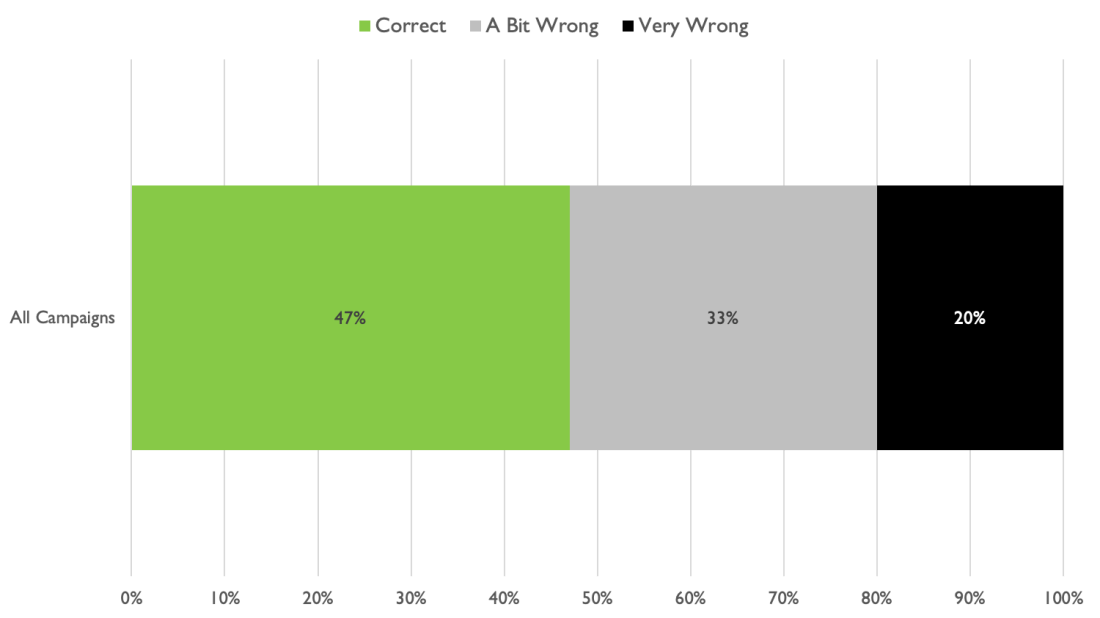

I correctly predicted the LinkScore band for just 47% of campaigns

This means I am wrong more often than I am right.

This was pretty shocking – I really thought that I’d be better at making predictions than that. I was interested to see how wrong I was – remember I was scoring campaigns based on a scale, and so I wanted to see how far off my predictions were.

I decided to bucket the predictions which were wrong as follows:

A bit wrong = plus or minus one scoring band e.g. I predicted band “A” (100+ links); but the campaign actually achieved band “B” (50 – 99 links).

Very wrong = plus or minus two or more scoring bands e.g. I predicted band “A” (100+ links); but the campaign actually achieved band “C” (20 – 49 links).
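To make those buckets concrete, here is a rough sketch of how the bucketing could be expressed in code. It assumes bands are compared by their position in the A to E scale; the names `classify_prediction` and `summarise` are hypothetical, and I did the real analysis by hand rather than with a script like this.

```python
from collections import Counter

# Bands in order from best to worst; position in this string gives the rank.
BAND_ORDER = "ABCDE"


def classify_prediction(predicted, actual):
    """Bucket a prediction by how many bands it was out by."""
    distance = abs(BAND_ORDER.index(predicted) - BAND_ORDER.index(actual))
    if distance == 0:
        return "correct"
    if distance == 1:
        return "a bit wrong"
    return "very wrong"  # out by two or more bands


def summarise(pairs):
    """Count how many (predicted, actual) pairs fall into each bucket."""
    return Counter(classify_prediction(p, a) for p, a in pairs)
```

Using the two examples above, `classify_prediction("A", "B")` comes out as "a bit wrong" and `classify_prediction("A", "C")` as "very wrong".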

Here’s how I fared across all campaigns:

In addition to making accurate predictions about just 47% of our campaigns; I was very wrong about 1 in 5.

I did not feel good about this at all.

Right now I suspect some of you might be wondering why on earth you’re wasting your time reading a post from a human who clearly has little to no idea what she’s doing.

Shaken as I was to discover just how poor I was at making these predictions, I learned some important lessons as a result of doing this analysis.

What I learned…

Given my predictions really weren’t accurate I was keen to understand where my thinking had gone awry.

Can I predict a ‘winner’?

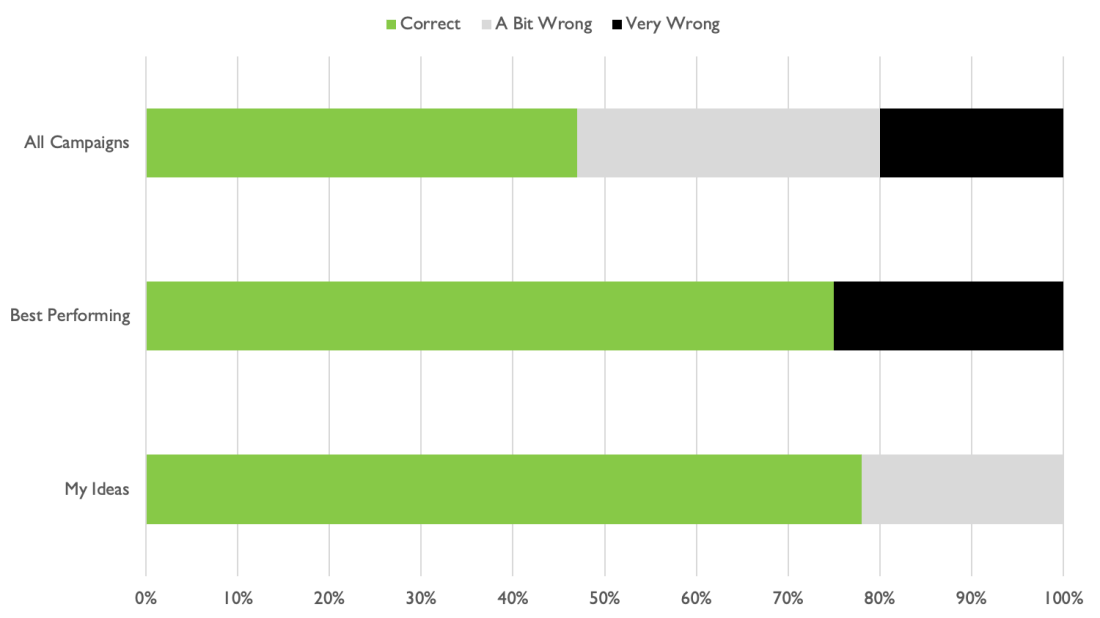

First up, I looked at how accurate my predictions were for our best performing campaigns – i.e. the campaigns which achieved either an “A” band (100+ links) or “B” band (50 – 99 links).

I did a little bit better here, accurately predicting the band for 75% of our best performing campaigns; however, when I was wrong, I was very wrong (out by two or more scoring bands):

In order to better understand what was causing my predictions to be so far off, I looked at one of the campaigns I’d predicted outrageously wrongly:

This is On Location, a piece we created for GoCompare. Using 20 years of IMDb data, we calculated the most filmed locations on the planet.

I predicted this piece would achieve band “E” (less than 10 links); however, in fact, this was a band “A” campaign which generated over 390 links.

So I was about as wrong as a person can be.

What was my problem? Remember I said that I think I considered three things when trying to make these predictions? I’ll deal with them each in turn:

Resonance

Have you ever wondered what the most filmed locations on earth are?

It’s definitely not a question that keeps me awake at night, and I’m not sure I can honestly say it’s something I’ve ever wondered.

But what if someone posed the question to you: Do you know what the most filmed location on earth is? Is it something you’re curious to know the answer to? For me, it sparked a little idle curiosity, but not much more.

What about the resonance of filming locations as a topic?

Based on these numbers I guess it’s somewhat resonant; but ultimately when it came down to it I was pretty much on the fence.

Breadth of Appeal

I was concerned when it came to breadth of appeal too: I didn’t feel that the results were surprising (Central Park tops the list) and, as such, I felt that the piece would have limited appeal:

I was *really* wrong here (more on this later).

Past Experience

I suspect that it was some pretty lazy thinking about past experiences that really led me awry here. I said above that I didn’t think the results were surprising, and so, I felt that the piece would have limited appeal.

I think I was hoping for a result like this – Guardians of the Galaxy is the film which has the highest on-screen death count (by quite some margin):

Or possibly a result like this – Axl Rose has a greater vocal range than Mariah Carey:

What was going on for me here?

I think that when you create a lot of pieces like this, you create a sort of mental shorthand to categorise them. This can either be helpful or harmful depending on how lazy your thinking is.

Somewhere along the line, in my head, On Location had become “Director’s Cut for filming locations”.

As such, in order to succeed what we needed was a surprising winner.

But the data did not support that.

Actually, the piece was not “Director’s Cut for filming locations”; it was much more like another piece we’d created:

In Unicorn League we set out to uncover what these $1bn companies and the people who founded them have in common.

This piece tells you exactly what you would expect – more unicorn founders attended Stanford than any other institution:

This is not surprising. It’s something that many people would suspect to be the case. What’s compelling is that we’ve proved that it’s true.

The piece is not “Director’s Cut for filming locations”, it’s closer to “Unicorn League for filming locations”. The results aren’t surprising, but journalists covered it because we’ve proved it.

This very lazy shorthand of mine caused me to miss the potential for breadth of appeal for On Location too.

Something you may or may not be aware of, is that when you create a piece with a very surprising result (like Director’s Cut or Vocal Ranges), you have a degree of breadth of appeal because lots of journalists will write up the story; however, you don’t have true breadth, because there’s only one story – the big surprising result. You get lots of coverage, but it’s essentially the same story being written over and over again.

You don’t have multiple stories.

Conversely, with a piece like Unicorn League (and, as it would transpire with On Location), you don’t have a single story – you have many stories.

For Unicorn League we got a bunch of coverage with the most popular university angle. But there were tonnes of other angles in the piece which allowed journalists to tell multiple stories.

By thinking about On Location as “Director’s Cut for filming locations” I completely neglected to think about the other potential stories buried within the data.

Fortunately a few members of the team at Verve were smart enough to recognise the flaw in my thinking, and convinced me that we should definitely create and launch the piece.

I am very grateful that they did; however, when it came to my predictions, I was obviously still really holding on to those misconceptions.

Ultimately, they were right, and I was wrong.

On Location had huge breadth of appeal, and the data we had allowed us to pitch in multiple stories.

We had topical angles for Valentine’s Day:

Niche angles:

And many, many local angles:

I don’t want to bore you to tears with tonnes of examples here, but we generated angles for various countries, every US state, and a bunch of cities.

So, it would appear that some lazy thinking about past experiences caused my predictions to be wildly off, but I wondered what else might affect my judgement.

Do I fall in love with my own ideas?

What if the campaign was my idea? Would I make predictions which were overly-optimistic?

I was relieved to find that actually, my predictions about my own ideas were the most accurate. I correctly predicted the scoring band for 78% of my own campaign ideas, and the remaining 22% were only wrong by one band:

For what it’s worth, I suspect that if I’d done this exercise earlier in my career, I would definitely not have made such accurate predictions. Falling in love with your own ideas is a danger, and I suspect I’ve trained myself out of it.

Where am I going wrong?

So, it looks like I’m able to make pretty accurate predictions about my own ideas (I’m right 78% of the time), and 75% of the time I’m able to recognise a high performing campaign… So where am I going wrong?

I decided to cut the data again. This time I split the campaigns into ideas I loved, and those I didn’t.

I recognise that this might be tricky to parse, but a high prediction from me doesn’t necessarily mean that I love the idea.

Here’s a good example of that: in this piece, we revealed the most congested roads in the UK:

This was my idea. I was confident that it would work, because in the course of my research I’d spotted that automotive journalists frequently covered stories around this topic, and that other similar pieces had performed well:

I felt that it was a resonant topic, because pretty much everyone gets annoyed about being stuck in traffic. I also felt that it had reasonable breadth of appeal because we could create stories for both UK nationals & regionals.

My prediction for this piece was band “A” (100+ links), and it actually got over 300 links. So I was confident it would work and it did.

But do I love the idea? Honestly? No I don’t.

So, what happens to my predictions when I love the idea?

If I love the idea my predictions are only accurate 36% of the time

Also, if I love the idea my predictions are always high as opposed to low.

If I don’t love the idea, my predictions are accurate 71% of the time, (and when I’m wrong I’m only a bit wrong):

Loving an idea is apparently still a problem for me.

But, as the chart above shows, I don’t fall in love with my own ideas. I fall in love with other people’s.

This is a really valuable thing for me to know.

If I know I have a tendency to fall in love with other people’s ideas (and that when I do so it affects my judgement), I know where I need to do more work, and be more thoughtful and considered in evaluating ideas.

So I feel like I learned an important lesson here; but there was also a bigger, more scary one than this…

I may not be predicting the future, I might actually be affecting it

This is Demolishing Modernism, a tribute to the buildings we’ve lost to the wrecking ball:

I accurately predicted the scoring band for this campaign.

But that’s not the whole story. Two months post-launch, here’s where we were at – just 1,000 LinkScore points:

On the 17th May, I had a line manager meeting with Matt, who was outreaching the campaign. In his notes I made this comment:

Keep going with Demolishing Modernism to see if we can get a little more with this one.

Look what happened in June:

I don’t think I did this deliberately in order to make my predictions correct. But I can’t help but feel like my own belief or bias affected the outcome here.

What if my prediction was different? If I’d predicted around 1,000 LinkScore points would I have been happy stopping here?

I deliberately did not share my predictions with the team (in fact, they didn’t even know that I was making predictions), but I can’t help but wonder if I was unconsciously influencing them nevertheless.

I feel like there’s possibly a bigger learning here too which Wil Reynolds picked up on: to what extent do our biases unconsciously affect our decisions and judgement?

This doesn’t just apply to creative pieces – it’s entirely possible that the unconscious thoughts or beliefs we have about any type of work we do could be affecting (either positively or negatively) the results we achieve.

But still, there’s more…

As you can probably tell, putting together this talk was a really introspective exercise for me.

I was really surprised at some of the scores I’d given particular creative pieces. There were many successful pieces that I was shocked to see that I had scored so low.

My memory, it seems, is incredibly unreliable.

Perhaps this isn’t a surprise to you. I’m sure that you too, have put something in a ‘safe’ place, and then completely forgotten where that ‘safe’ place is. Most people would accept that they have a poor memory for that sort of thing.

But what about past events?

Most people think that they’re able to accurately recall things that happened in the past and how they were feeling at the time.

However, neuroscientists have discovered that each time we remember something, we reconstruct the event – essentially we reassemble it, based on what we now know.

As such, it’s incredibly difficult for me to really remember how I felt about a particular piece prior to it launching – I may think that I always thought it would be successful, but am I just reconstructing my memory based on what I know happened post-launch?

Additionally, psychologists have noted that we suppress memories that are painful or damaging to our self-esteem.

It’s my job to make accurate judgements about the likely success or failure of creative pieces. It would be damaging to my self-esteem to recall instances where I was wrong, so it’s conceivable that I would suppress memories like that; or I might unconsciously reconstruct, or reassemble my memory to create a version of history where I always thought a particular campaign would be successful.

To some extent I was already aware of this phenomenon. I frequently speak or write about content we’ve created, and I’ve been surprised in the past when I’ve spoken to the various people involved in a project, and noted that their memories of the events that transpired in the course of creating and outreaching a piece vary wildly.

But somehow I think I thought I was immune – that my memories of how things transpired were reliable. They definitely aren’t.

But perhaps the biggest and most important lesson I learned was this – no matter which way I twist or turn it, or crunch those numbers:

My judgement is considerably worse than I thought.

I think that this is a really powerful thing to learn. Over time, if I can recognise the situations in which my judgement is bad, I might be able to adapt and make better decisions.

Final thoughts…

I believe that getting things right is more important than being right.

This exercise proved to me that it’s really important to listen to other people, and let them try things out – even if you’re not convinced that those things will work.

I think this is important on two counts:

Firstly because, if things do go wrong, you’re giving people the opportunity to learn something.

Telling people not to do stuff because you think it won’t work, doesn’t teach people anything. I suspect that often it will just lead them to resent you.

I think that often when people do stuff like this their intentions are good – they are trying to protect others from failure. But you only fail when you stop. If you try something that doesn’t work, and then you try something else instead (which does work), it’s not a failure, it’s just a hiccup.

Moreover, when you prevent people from trying things out, you’re actively stopping them from learning and developing. If you’re not allowed to make mistakes you can’t learn anything.

The second reason why I think this is important, is this:

it’s likely that you know a lot less than you think

Looking back there have been countless times that members of my team have tried things out that I was certain wouldn’t work. Sometimes they were big things, campaigns like On Location which I thought would get less than 10 links, but actually generated over 390. Sometimes those things were smaller, like an outreach angle or a different way of approaching a journalist.

But I let them try out those things anyway.

And I’m really glad that I did.

Stopping them from doing those things would have been a huge error, because a whole bunch of those things worked out just great.

It occurs to me that perhaps we all know a lot less than we think.

And that all of us make pretty bad judgement calls more often than we think.

This might sound like a potentially fatal flaw, but I suspect that this only becomes truly problematic when we fail to acknowledge that this is the case.